Correlation analysis is a fundamental statistical tool used to study the relationship between two or more features. The term “correlation” originates from the Latin correlatio, meaning a mutual relationship. In statistics, correlation is used as a measure of association, that is, as a numerical way of describing how strongly two variables tend to vary together.

Understanding correlation is important in data science for several reasons. Correlations can help us identify potentially interesting relationships in the data, detect redundant variables, and guide feature selection in later modeling steps. They are also often a useful starting point for a deeper investigation of a system. At the same time, we have to be careful: correlation and causation are very clearly not the same.

In everyday language, we often say that two things “correlate” when they seem to go together or line up well. In statistics, however, we want something more precise: a numerical measure that tells us whether an association exists and, if so, how strong it is.

Covariance and the (Pearson) correlation coefficient

Covariance

A natural starting point is the concept of variance, which measures how strongly the values of a single variable vary around their mean. If we now move from one variable to two variables, we arrive at covariance.

Covariance measures whether two variables tend to increase and decrease together. If large values of one variable tend to occur together with large values of the other, the covariance will be positive. If large values of one variable tend to occur together with small values of the other, the covariance will be negative.

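The formula for covariance between two variables $X$ and $Y$ is given by:

$$
\mathrm{Cov}(X, Y) = \frac{1}{n} \sum_{i=1}^{n} (x_i - \bar{x})(y_i - \bar{y})
$$

where $x_i$ and $y_i$ are the individual values and $\bar{x}$ and $\bar{y}$ are the means of $X$ and $Y$. (For sample data, the sum is often divided by $n - 1$ instead of $n$; this is also the default in NumPy and pandas.)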

Covariance is useful in theory, but in practice it is often difficult to interpret directly because its value depends on the scale of the variables.
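To make the scale dependence concrete, here is a minimal sketch with made-up height and weight values (the numbers are illustrative assumptions, not taken from any dataset in this chapter). Expressing the heights in centimetres instead of metres multiplies the covariance by 100, although the relationship between the two variables has not changed.

```python
import numpy as np

# Hypothetical toy measurements (values are made up for illustration)
height_m = np.array([1.60, 1.70, 1.75, 1.80, 1.90])
weight_kg = np.array([55.0, 65.0, 70.0, 80.0, 90.0])

# np.cov returns the 2x2 covariance matrix; entry [0, 1] is Cov(X, Y).
# Note: np.cov divides by n - 1 by default (sample covariance).
cov_m = np.cov(height_m, weight_kg)[0, 1]

# The same heights, now expressed in centimetres
height_cm = height_m * 100
cov_cm = np.cov(height_cm, weight_kg)[0, 1]

print(f"Cov (height in m):  {cov_m:.3f}")
print(f"Cov (height in cm): {cov_cm:.3f}")
```

The two numbers describe exactly the same data, which is why covariance on its own is hard to read as a measure of strength.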

Pearson Correlation Coefficient

To make the measure easier to interpret, we can normalize the covariance. This gives us the Pearson correlation coefficient, often simply called the correlation coefficient.

The Pearson Correlation Coefficient is calculated as:

$$
\mathrm{Corr}(X, Y) = \frac{\mathrm{Cov}(X, Y)}{\sigma_X \, \sigma_Y}
$$

where $\sigma_X$ and $\sigma_Y$ are the standard deviations of $X$ and $Y$.

The Pearson correlation coefficient is always between -1 and 1, which provides a clearer, scale-independent measure of the relationship between $X$ and $Y$. A value close to 1 indicates a strong positive linear relationship, whereas a value close to -1 indicates a strong negative linear relationship. Values close to 0 indicate little or no linear relationship.

The important word here is linear. Pearson correlation is designed to capture linear associations, not arbitrary relationships, as we will see in some of the following examples.
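As a small sanity check, the coefficient can be computed by hand as the covariance divided by the product of the two standard deviations, and compared with `np.corrcoef`. This is only a sketch on randomly generated data; the seed, sample size, and noise level are arbitrary assumptions.

```python
import numpy as np

rng = np.random.default_rng(seed=42)  # arbitrary seed, chosen for this sketch
x = rng.normal(0, 1, 200)
y = 0.5 * x + rng.normal(0, 1, 200)

# Covariance and standard deviations, all using the same 1/n normalization
cov_xy = np.mean((x - x.mean()) * (y - y.mean()))
r_manual = cov_xy / (x.std() * y.std())  # np.std divides by n by default

# Reference value from NumPy
r_numpy = np.corrcoef(x, y)[0, 1]

# Rescaling one variable leaves the coefficient unchanged
r_scaled = np.corrcoef(100 * x, y)[0, 1]

print(f"manual: {r_manual:.4f}, np.corrcoef: {r_numpy:.4f}, rescaled x: {r_scaled:.4f}")
```

As long as the covariance and the standard deviations use the same normalization, the factor cancels, so dividing by n or by n - 1 gives the same coefficient.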

    import os
    import pandas as pd
    import numpy as np
    from matplotlib import pyplot as plt
    import seaborn as sb

    # Set the ggplot style (optional)
    plt.style.use("ggplot")

# Create some toy data

# Random number generator

rng = np.random.default_rng(seed=0)

# Genereated data

a = np.arange(0, 50)

b = a + rng.integers(-10, 10, 50)

c = a + rng.integers(-20, 25, 50)

d = rng.integers(0, 50, 50)

corr_data = pd.DataFrame({"a": a,

"b": b,

"c": c,

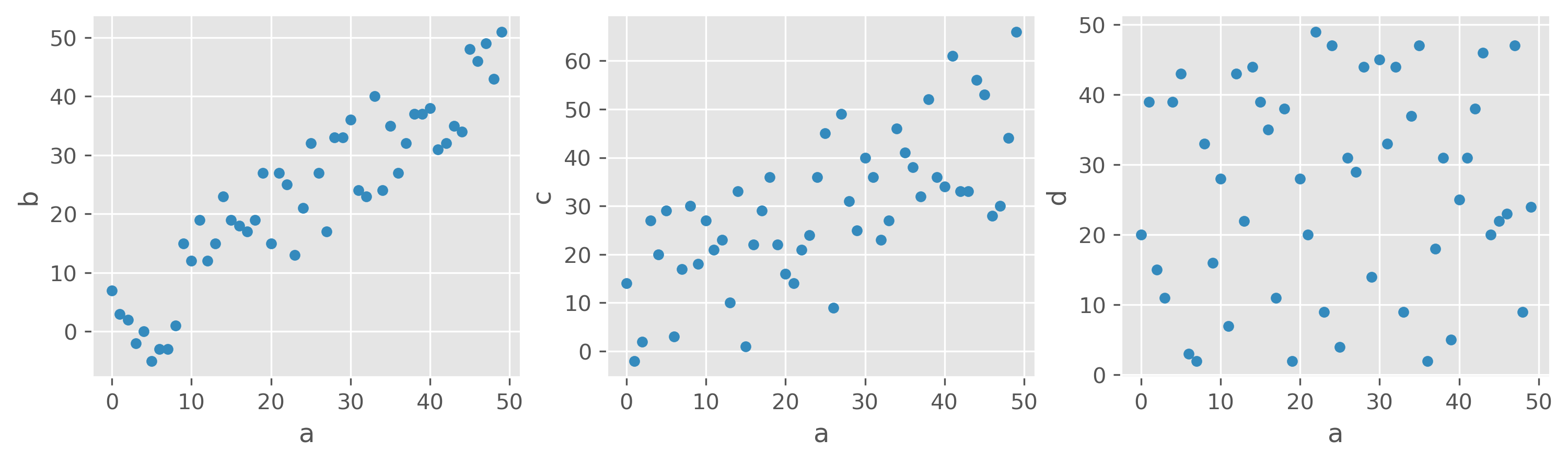

"d": d})corr_data.head(3)We created some toy data with four features: a, b, c, and d. One of the simplest ways to look for interesting relationships between variables is to plot one feature against another.

    fig, (ax1, ax2, ax3) = plt.subplots(1, 3, figsize=(12,3), dpi=300)

    corr_data.plot(kind="scatter", x="a", y="b", ax=ax1)
    corr_data.plot(kind="scatter", x="a", y="c", ax=ax2)
    corr_data.plot(kind="scatter", x="a", y="d", ax=ax3)
    plt.show()

Which features would you say are correlated, and which are not?

Looking at the three scatter plots above, most people would probably agree that a and b show a strong positive relationship, a and c still show a visible but weaker one, and a and d appear largely unrelated. This already suggests that correlation should not only tell us whether a relationship exists, but also how strong it is.

For these examples, the Pearson correlation coefficients are:

    print(f"Corr(a, b) = {np.corrcoef(corr_data.a, corr_data.b)[1, 0]:.2f}")
    print(f"Corr(a, c) = {np.corrcoef(corr_data.a, corr_data.c)[1, 0]:.2f}")
    print(f"Corr(a, d) = {np.corrcoef(corr_data.a, corr_data.d)[1, 0]:.2f}")

Output:

    Corr(a, b) = 0.91
    Corr(a, c) = 0.70
    Corr(a, d) = 0.07

We therefore observe a strong positive linear correlation between a and b, a more moderate positive linear correlation between a and c, and almost no linear correlation between a and d.

Correlation Matrix

For datasets with many numerical variables, it is often useful to calculate all pairwise Pearson correlations at once. The result is called a correlation matrix.

For variables a, b, and c, this matrix contains the Pearson correlation coefficients for all possible pairs:

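| | a | b | c |
|---|---|---|---|
| a | Corr(a, a) | Corr(a, b) | Corr(a, c) |
| b | Corr(b, a) | Corr(b, b) | Corr(b, c) |
| c | Corr(c, a) | Corr(c, b) | Corr(c, c) |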

The diagonal is always 1, because every variable is perfectly correlated with itself. The off-diagonal entries are the interesting ones, because they show the strength and direction of the association between different variables.

Using pandas, we can compute such a matrix very easily:

    corr_data.corr()

A more realistic example

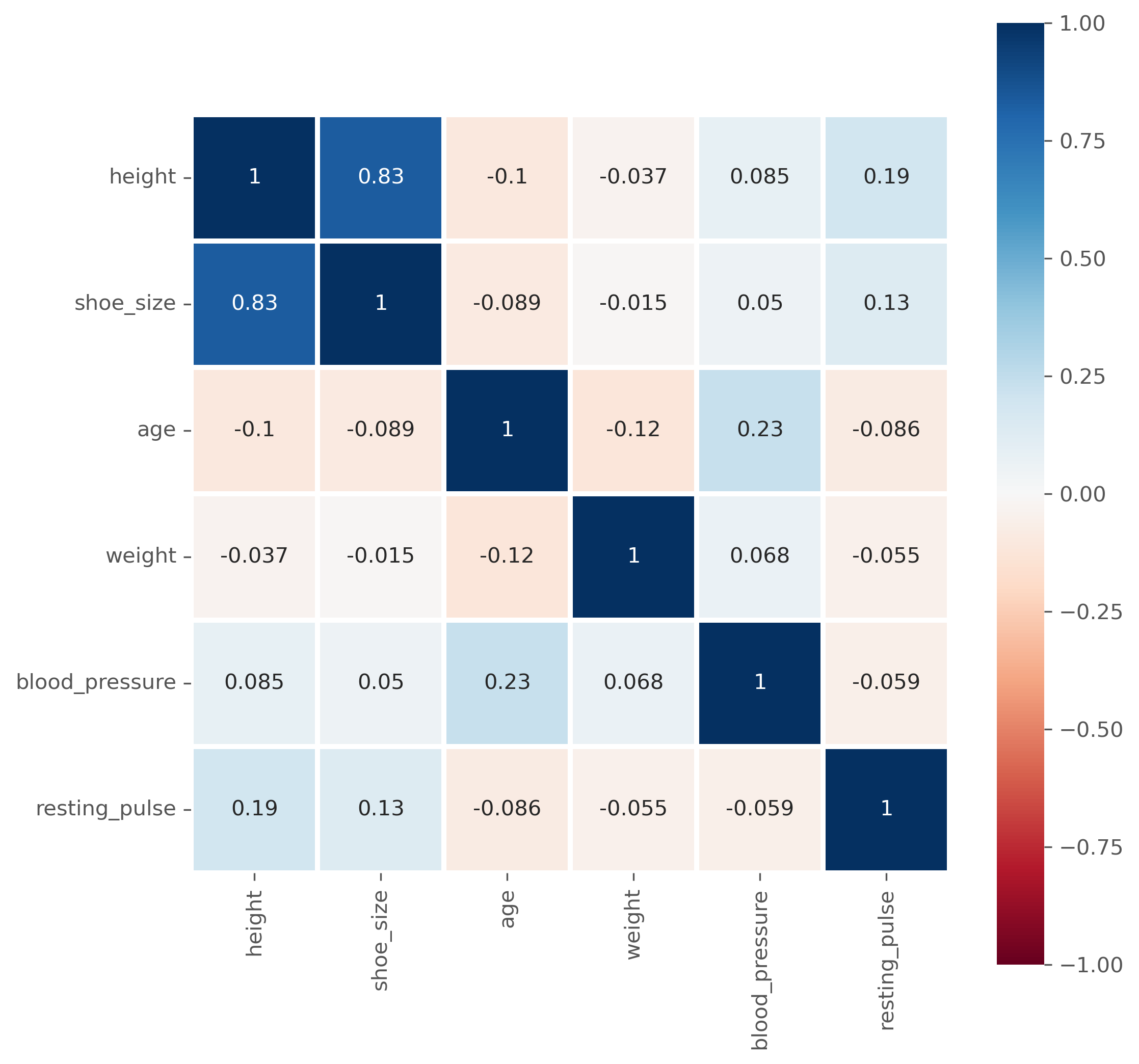

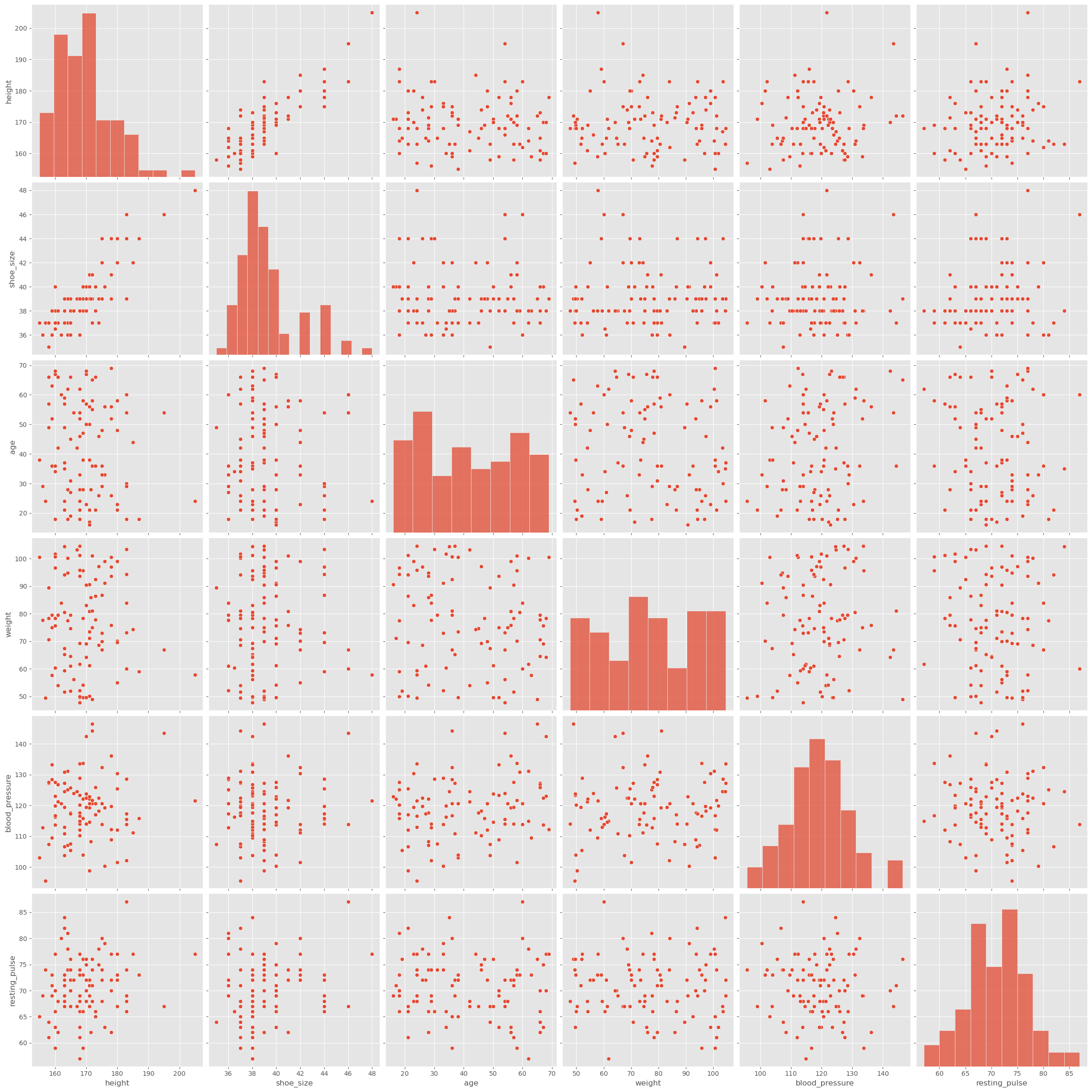

In the following, we continue to work with generated data, but now with a dataset that is at least somewhat more realistic. Suppose we want to investigate whether body-related variables show interesting correlations. We begin with a toy dataset containing variables such as height, shoe size, age, weight, blood pressure, and resting pulse.

    filename = r"https://raw.githubusercontent.com/florian-huber/data_science_course/main/datasets/wo_men.csv"
    data_people = pd.read_csv(filename)
    data_people.head()

As introduced in previous chapters, one of the first things we often do is inspect a dataset using simple summary statistics. In pandas, this is very easy:

    data_people.describe()

In the table above, some values already look suspicious. For example, the minimum height is 1.63, while the maximum height is 364.0. Clearly, something is wrong here. Perhaps some entries were recorded in different units, perhaps there are input errors, or perhaps someone entered nonsense values. These are typical issues that a quick first inspection can reveal.

Before computing correlations, we therefore do a small amount of cleaning and retain only values within roughly plausible bounds.

    mask = (data_people["shoe_size"] < 50) & (data_people["height"] > 100) & (data_people["height"] < 250)
    data_people = data_people[mask]

We can then move on to computing the correlations:

    data_people.corr(numeric_only=True)

Question: What does that actually mean?

Instead of a table of numbers, the correlation matrix is often shown graphically, especially for larger datasets, because this makes particularly high and low coefficients easy to spot.

    fig, ax = plt.subplots(figsize=(8, 8), dpi=300)

    sb.heatmap(
        # numeric_only=True is required for pandas >= 2.0 if the data contains
        # non-numeric columns, but it is also generally a good idea.
        data_people.corr(numeric_only=True),
        vmin=-1, vmax=1,
        square=True, lw=2,
        annot=True, cmap="RdBu",
        ax=ax,
    )
    plt.show()

Limitations of the (Pearson) Correlation Measure

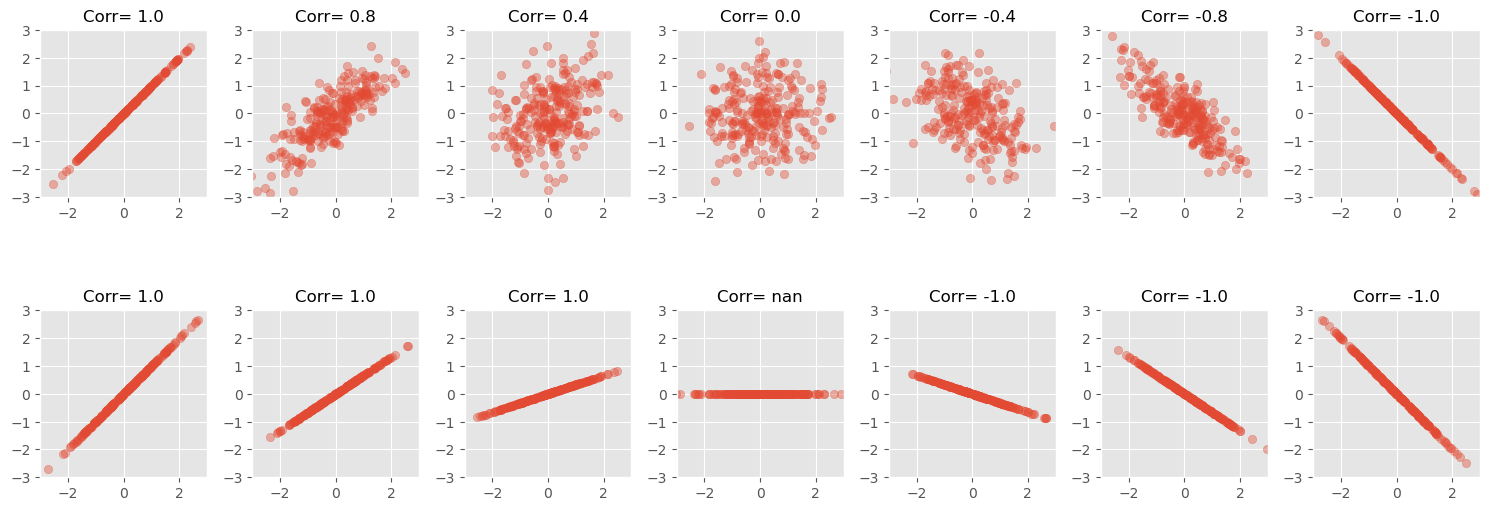

Correlation coefficients close to 1 or -1 indicate a pronounced association in the data, which, in the case of the Pearson correlation, usually means that there is a clear linear dependency between two features.

This approach, however, has several limitations that can complicate the interpretation of such correlation measures. In the following, the most common pitfalls will be presented.

The slope does not determine the correlation strength

A strong correlation means that the data follows a clear linear pattern. But the value of the Pearson correlation coefficient does not tell us how steep that line is. It is a measure of how well the data follows a linear relationship, not of the slope of that relationship.

That is why two datasets can have very different slopes but the same correlation coefficient.

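One special case is visible in the lower row of the figure below: with a slope of 0, y is constant, and for a perfectly constant variable the Pearson correlation is not defined, because the standard deviation is zero.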

    NUM_POINTS = 250

    # Seed for reproducibility
    np.random.seed(0)

    def scatter_plot(ax, x, y):
        """Create a simple scatter plot."""
        ax.scatter(x, y, alpha=0.4)
        # Set aspect to equal for better comparison
        ax.set_aspect('equal')
        ax.set_xlim([-3, 3])
        ax.set_ylim([-3, 3])

    def scatter_corr(ax, corr_coef, num_points=NUM_POINTS):
        """Create a scatter plot of noisy data with a given correlation coefficient."""
        x = np.random.normal(0, 1, num_points)
        y = x * corr_coef + np.random.normal(0, np.sqrt(1 - corr_coef**2), num_points)
        scatter_plot(ax, x, y)
        ax.set_title(f"Corr= {corr_coef:.1f}", fontsize=12)

    def scatter_linear(ax, slope, num_points=NUM_POINTS):
        """Create a scatter plot of perfectly linear data with a given slope."""
        x = np.random.normal(0, 1, num_points)
        y = x * slope
        scatter_plot(ax, x, y)
        corr_coef = np.corrcoef(x, y)[1, 0]
        ax.set_title(f"Corr= {corr_coef:.1f}", fontsize=12)

    # Top row: varying correlation coefficients; bottom row: varying slopes
    correlation_coefficients = [1, 0.8, 0.4, 0, -0.4, -0.8, -1]
    slopes = [1, 2/3, 1/3, 0, -1/3, -2/3, -1]

    fig, axes = plt.subplots(2, len(correlation_coefficients), figsize=(15, 6))
    for i, coef in enumerate(correlation_coefficients):
        scatter_corr(axes[0, i], coef)

    for i, slope in enumerate(slopes):
        scatter_linear(axes[1, i], slope)

    fig.tight_layout()
    plt.show()

Output:

    numpy\lib\_function_base_impl.py:3023: RuntimeWarning: invalid value encountered in divide
      c /= stddev[:, None]
    numpy\lib\_function_base_impl.py:3024: RuntimeWarning: invalid value encountered in divide
      c /= stddev[None, :]

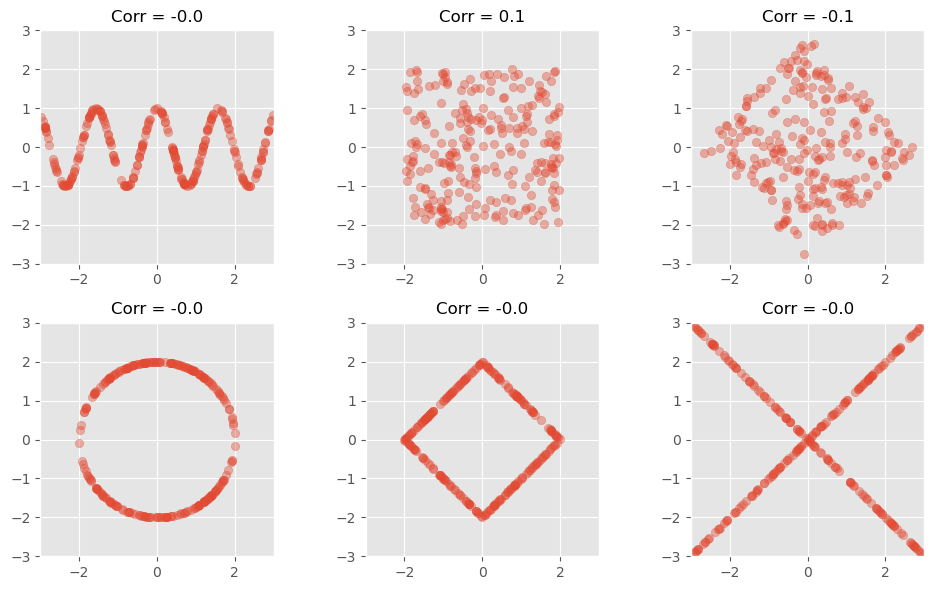
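The same point can be checked numerically without a plot. The following sketch (with an arbitrary seed and noise level, both assumptions made for illustration) rescales one variable by very different factors and shows that the coefficient does not change, while a constant variable yields NaN.

```python
import numpy as np

rng = np.random.default_rng(seed=1)  # arbitrary seed for this sketch
x = rng.normal(0, 1, 250)
y = x + rng.normal(0, 0.5, 250)

# Rescaling y changes the slope of the point cloud, but not the correlation
rs = []
for factor in [0.1, 1, 10]:
    r = np.corrcoef(x, factor * y)[0, 1]
    rs.append(r)
    print(f"slope factor {factor:>4}: r = {r:.3f}")

# A constant variable has zero standard deviation, so r is undefined (NaN).
# np.errstate silences the RuntimeWarning that NumPy would otherwise emit.
with np.errstate(invalid="ignore", divide="ignore"):
    r_const = np.corrcoef(x, np.full_like(x, 2.0))[0, 1]
print(f"constant y: r = {r_const}")
```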

Pearson correlation detects linear relationships only

A high positive or negative Pearson correlation indicates a clear linear relationship. But a low Pearson correlation is much harder to interpret. Most importantly, a low Pearson correlation does not mean that there is no meaningful relationship between two variables.

The following figure shows several datasets with visible structure, but little or no Pearson correlation.

    NUM_POINTS = 250

    # Seed for reproducibility
    np.random.seed(0)

    # Define a function to create scatter plots with non-linear relationships
    # (uses scatter_plot() from the previous code block)
    def scatter_nonlinear(ax, pattern):
        # Generate x values
        x = np.random.uniform(-3, 3, NUM_POINTS)

        if pattern == 'wave':
            y = np.cos(x*4)
        elif pattern == 'square':
            y = np.random.uniform(-3, 3, NUM_POINTS)
            x *= 2/3
            y *= 2/3
        elif pattern == 'square_rotated':
            y = np.random.uniform(-3, 3, NUM_POINTS)
            points = np.vstack([2/3*x, 2/3*y])
            theta = np.radians(45)
            c, s = np.cos(theta), np.sin(theta)
            rotation_matrix = np.array([[c, -s], [s, c]])
            rotated_points = rotation_matrix.dot(points)
            (x, y) = rotated_points
        elif pattern == 'circle':
            x = np.random.uniform(-1, 1, NUM_POINTS)
            y = np.sqrt(1 - x**2) * np.random.choice([1, -1], NUM_POINTS)
            x *= 2
            y *= 2
        elif pattern == 'diamond':
            y = np.random.uniform(-3, 3, NUM_POINTS)
            y = np.sign(y) * (3 - np.abs(x))
            x *= 2/3
            y *= 2/3
        elif pattern == 'x':
            y = np.abs(x) * np.random.choice([1, -1], NUM_POINTS)

        # Create scatter plot
        scatter_plot(ax, x, y)
        corr_coef = np.corrcoef(x, y)[1, 0]
        ax.set_title(f"Corr = {corr_coef:.1f}", fontsize=12)

    # Define the patterns to be visualized
    patterns1 = ['wave', 'square', 'square_rotated']
    patterns2 = ['circle', 'diamond', 'x']

    fig, axes = plt.subplots(2, 3, figsize=(10, 6))
    for i, pattern in enumerate(patterns1):
        scatter_nonlinear(axes[0, i], pattern)

    for i, pattern in enumerate(patterns2):
        scatter_nonlinear(axes[1, i], pattern)

    fig.tight_layout()
    plt.show()

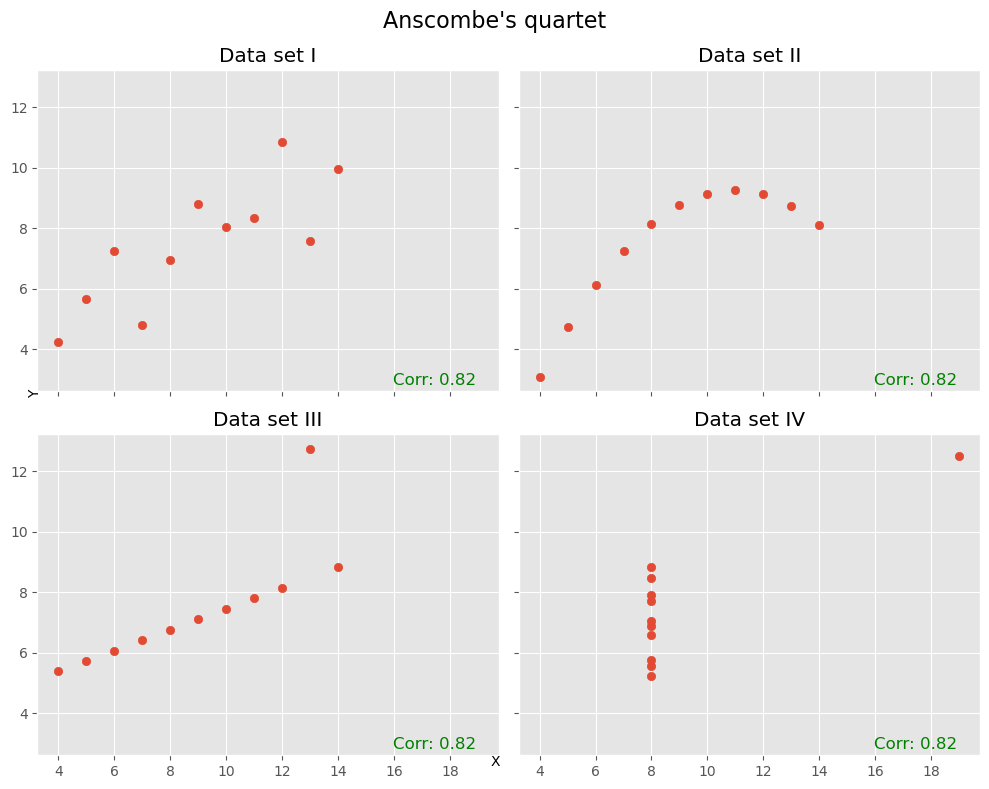

Another prominent example to illustrate how little the Pearson correlation coefficient sometimes tells us is the datasaurus dataset from the last chapter. Here, too, the data is distributed very differently, but the correlation coefficient remains the same.
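A very compact numerical version of this limitation is a parabola. In the sketch below (a constructed example, not part of the chapter's datasets), y is completely determined by x, yet the Pearson correlation over a symmetric range is practically zero. Restricting the data to positive x, where the relationship is monotonic, gives a high coefficient again.

```python
import numpy as np

x = np.linspace(-3, 3, 101)  # values symmetric around zero
y = x**2                     # y is fully determined by x, but not linearly

r_full = np.corrcoef(x, y)[0, 1]

# On the right half only, the same relationship is monotonically increasing
x_pos = x[x > 0]
r_half = np.corrcoef(x_pos, x_pos**2)[0, 1]

print(f"full range:  |r| < 1e-8 is {abs(r_full) < 1e-8}")
print(f"x > 0 only:  r = {r_half:.2f}")
```

So a coefficient near zero can simply mean that positive and negative trends cancel each other out.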

Pearson correlation is sensitive to outliers

Another famous illustration of the limitations of correlation is Anscombe’s quartet (Anscombe, 1973). These four datasets have very similar summary statistics and almost identical Pearson correlations, yet their actual distributions look very different. Among other things, this example shows how strongly correlation can be influenced by a small number of unusual points.

    # Defining the Anscombe's quartet dataset
    anscombe_data = {
        'I': {
            'x': [10.0, 8.0, 13.0, 9.0, 11.0, 14.0, 6.0, 4.0, 12.0, 7.0, 5.0],
            'y': [8.04, 6.95, 7.58, 8.81, 8.33, 9.96, 7.24, 4.26, 10.84, 4.82, 5.68]
        },
        'II': {
            'x': [10.0, 8.0, 13.0, 9.0, 11.0, 14.0, 6.0, 4.0, 12.0, 7.0, 5.0],
            'y': [9.14, 8.14, 8.74, 8.77, 9.26, 8.10, 6.13, 3.10, 9.13, 7.26, 4.74]
        },
        'III': {
            'x': [10.0, 8.0, 13.0, 9.0, 11.0, 14.0, 6.0, 4.0, 12.0, 7.0, 5.0],
            'y': [7.46, 6.77, 12.74, 7.11, 7.81, 8.84, 6.08, 5.39, 8.15, 6.42, 5.73]
        },
        'IV': {
            'x': [8.0, 8.0, 8.0, 8.0, 8.0, 8.0, 8.0, 19.0, 8.0, 8.0, 8.0],
            'y': [6.58, 5.76, 7.71, 8.84, 8.47, 7.04, 5.25, 12.50, 5.56, 7.91, 6.89]
        }
    }

    # Plotting the Anscombe's quartet
    fig, axs = plt.subplots(2, 2, figsize=(10, 8), sharex=True, sharey=True)
    axs = axs.flatten()  # To make it easier to iterate over

    # Calculating the correlation coefficient for each dataset
    correlation_coefficients = []

    for i, (key, data) in enumerate(anscombe_data.items()):
        axs[i].scatter(data['x'], data['y'])
        axs[i].set_title(f"Data set {key}")
        corr_coef = np.corrcoef(data['x'], data['y'])[0, 1]
        correlation_coefficients.append(corr_coef)
        axs[i].text(0.95, 0.01, f'Corr: {corr_coef:.2f}',
                    verticalalignment='bottom', horizontalalignment='right',
                    transform=axs[i].transAxes, color='green', fontsize=12)

    fig.text(0.5, 0.04, 'X', ha='center', va='center')
    fig.text(0.04, 0.5, 'Y', ha='center', va='center', rotation='vertical')

    plt.suptitle("Anscombe's quartet", fontsize=16)
    plt.tight_layout()
    plt.show()
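To see how much a single point can matter, the following sketch takes data set III from the quartet (the same values as in the code above) and recomputes the correlation after dropping the one point that lies far above the others. Removing a point this way is done here purely for illustration and is not meant as a general recommendation for handling outliers.

```python
import numpy as np

# Data set III of Anscombe's quartet (same values as in the code above)
x = np.array([10.0, 8.0, 13.0, 9.0, 11.0, 14.0, 6.0, 4.0, 12.0, 7.0, 5.0])
y = np.array([7.46, 6.77, 12.74, 7.11, 7.81, 8.84, 6.08, 5.39, 8.15, 6.42, 5.73])

# Correlation using all eleven points
r_all = np.corrcoef(x, y)[0, 1]

# Drop the single point with the largest y value (x = 13, y = 12.74)
keep = y < y.max()
r_without = np.corrcoef(x[keep], y[keep])[0, 1]

print(f"all points:      r = {r_all:.3f}")
print(f"without outlier: r = {r_without:.3f}")
```

The remaining ten points lie almost exactly on a straight line, so one unusual observation is enough to pull the coefficient down noticeably.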

Looking beyond a single number

As with many summary statistics, correlation coefficients can be useful, but they can also hide important structure. This is why it is often a good idea not to rely on a single number alone. Whenever possible, we should also inspect the actual distributions and scatter plots.

If there are not too many variables, tools such as pairplot() from seaborn can be very helpful.

    sb.pairplot(data_people, height=4)
    plt.show()

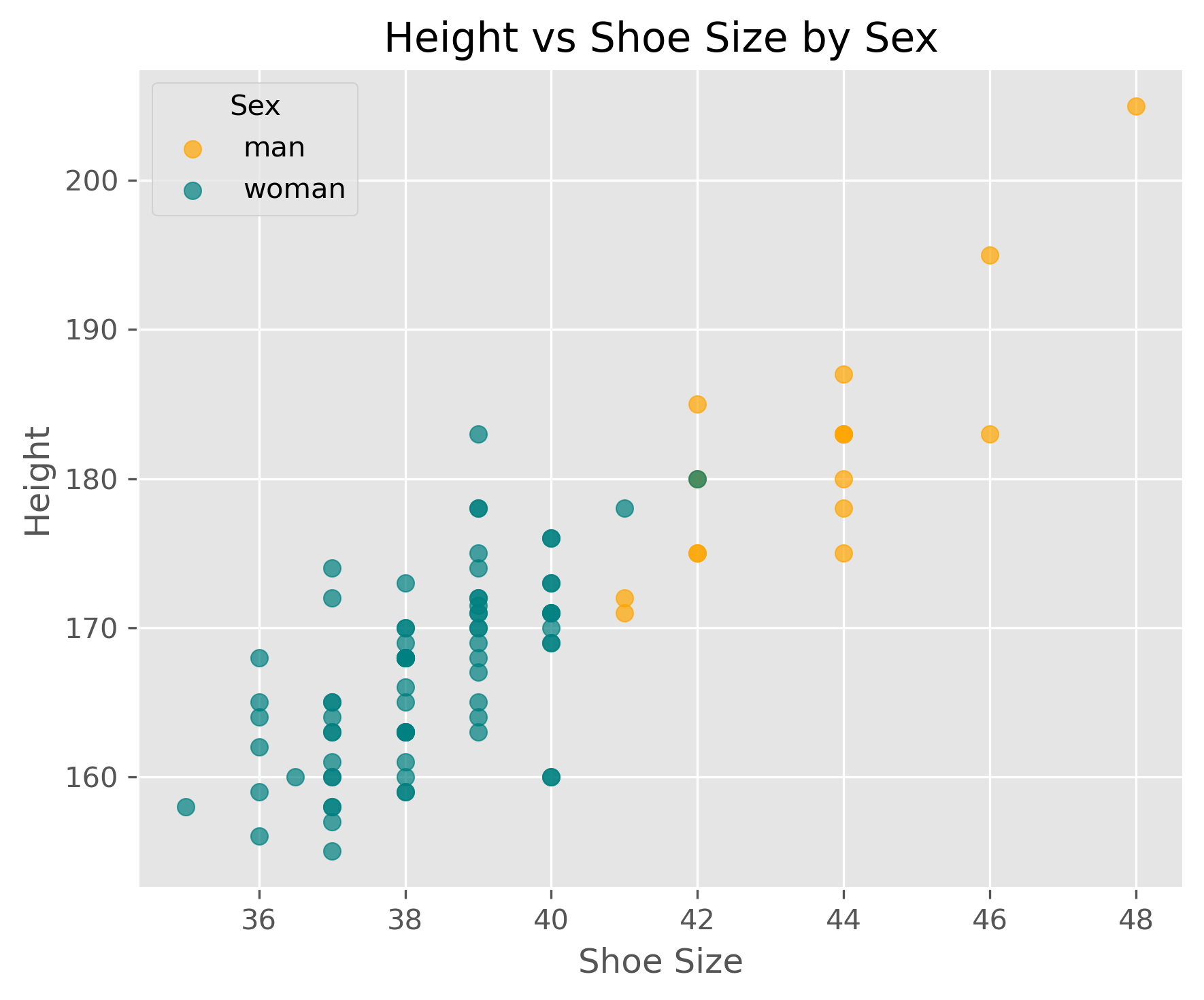

It is of course also possible to plot only the parts we are most interested in, for instance:

    fig, ax = plt.subplots(figsize=(6, 5), dpi=300)

    # plot men
    men = data_people[data_people.sex == "man"]
    ax.scatter(men.shoe_size, men.height, c="orange", label="man", alpha=0.7)

    # plot women
    women = data_people[data_people.sex == "woman"]
    ax.scatter(women.shoe_size, women.height, c="teal", label="woman", alpha=0.7)

    ax.legend(title="Sex")
    ax.set_xlabel("Shoe Size")
    ax.set_ylabel("Height")
    ax.set_title("Height vs Shoe Size by Sex")

    plt.tight_layout()
    plt.show()

What Does a Correlation Tell Us?

If we discover a strong correlation in our data, what does that actually tell us?

First, it tells us that two variables share information. If the correlation is very high, then knowing one variable already tells us a lot about the other. In some cases, this is useful. In other cases, it means that one of the variables may be redundant.

A correlation of 1.0 or -1.0 is not necessarily exciting. In practice, such perfect correlations often arise when two variables describe essentially the same thing in different forms. Typical examples are temperature in Celsius and Kelvin, or the diameter and circumference of a circle. In such cases, the correlation mainly tells us that one of the variables may be unnecessary.
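In practice, such redundant pairs can be found by scanning the correlation matrix for entries close to 1 or -1. The sketch below uses a small, randomly generated table; the column names, the seed, and the threshold of 0.95 are all assumptions chosen for illustration.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(seed=3)  # arbitrary seed for this sketch
celsius = rng.uniform(-10, 35, 100)

# Hypothetical weather table; the column names are made up
weather = pd.DataFrame({
    "temp_celsius": celsius,
    "temp_kelvin": celsius + 273.15,       # same information, only shifted
    "humidity": rng.uniform(20, 90, 100),  # generated independently
})

corr = weather.corr()
threshold = 0.95  # arbitrary cut-off for "practically redundant"

# Collect each pair once (upper triangle only, diagonal excluded)
cols = list(corr.columns)
redundant_pairs = [
    (cols[i], cols[j])
    for i in range(len(cols))
    for j in range(i + 1, len(cols))
    if abs(corr.iloc[i, j]) > threshold
]
print(redundant_pairs)
```

Whether one of the two columns should actually be dropped remains a modeling decision; the scan only points to candidates.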

More generally, correlations can be useful in at least two ways. Sometimes the correlation itself is already enough for the task at hand. In other cases, it is only a first clue that motivates deeper investigation.

Correlation vs. Causality

Searching for correlations is often a useful early step in data analysis. But why? What can we actually do with a discovered correlation?

The answer depends on the question we are trying to answer. In some cases, a correlation is already useful on its own. Suppose an online store finds that customers who buy item X also often buy item Y. Even without understanding the deeper reason behind this relationship, the store may already be able to use this information for recommendations, product placement, or inventory planning.

In other cases, however, the correlation is only the beginning. Then we want to know why two variables are related. Is one influencing the other? Is there a third factor affecting both? Or is the observed relationship perhaps just accidental?

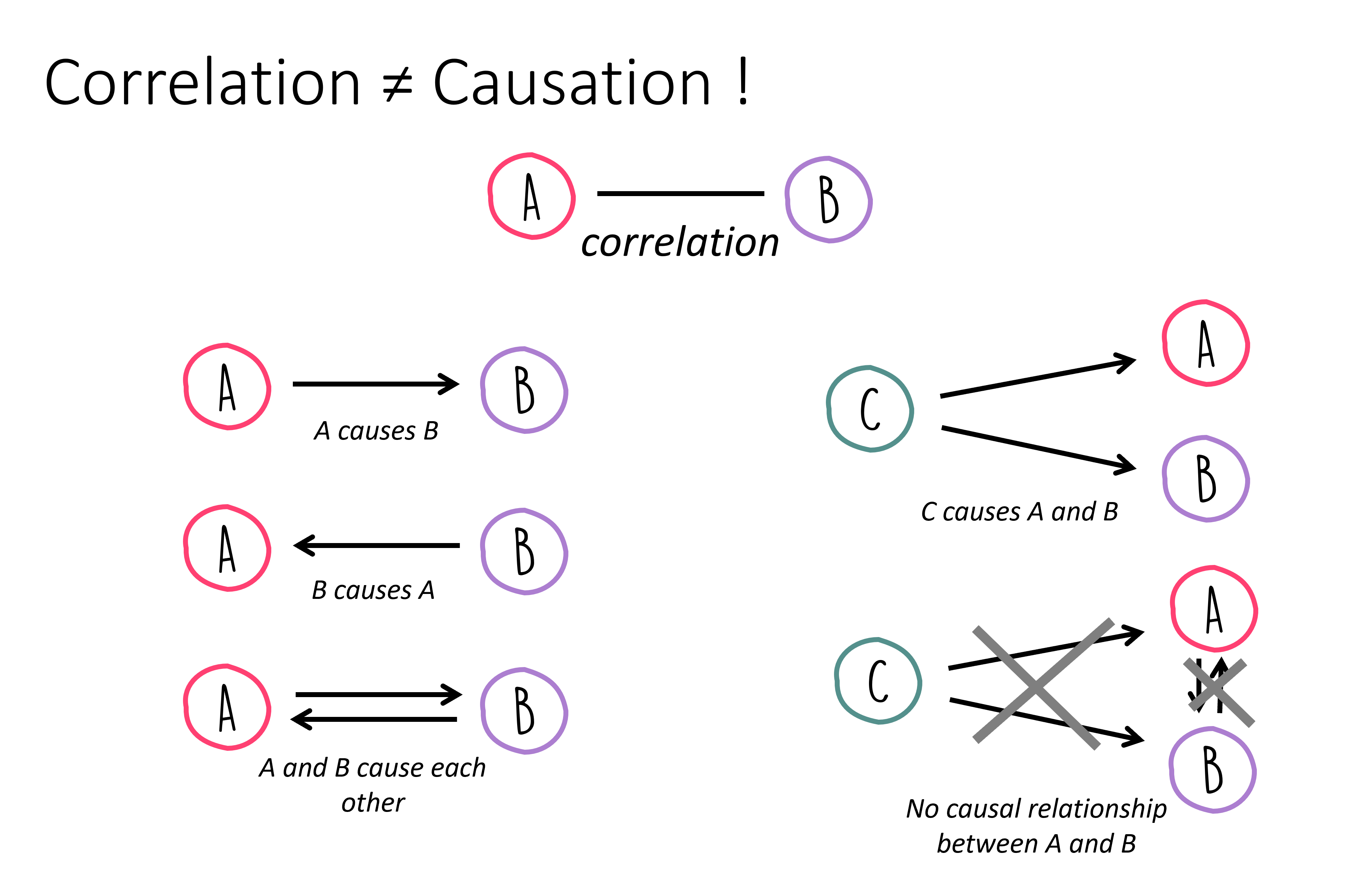

This brings us to the distinction between correlation and causality. A correlation means that two variables are statistically associated. A causal relationship means that one variable has an effect on the other.

These two ideas are not the same.

In everyday life, people often jump quickly from correlation to causation. For example:

“I ate fruit from tree B and then fell ill.”

“I took medicine X, and an hour later I felt better.”

In both instances, we essentially only observe a correlation, yet we often tend to quickly infer a causal link. But from correlation alone, we cannot yet conclude that one event caused the other. Perhaps something else caused the illness. Perhaps the person would have improved anyway, even without the medicine.

This is why the phrase “correlation is not causation” is so important.

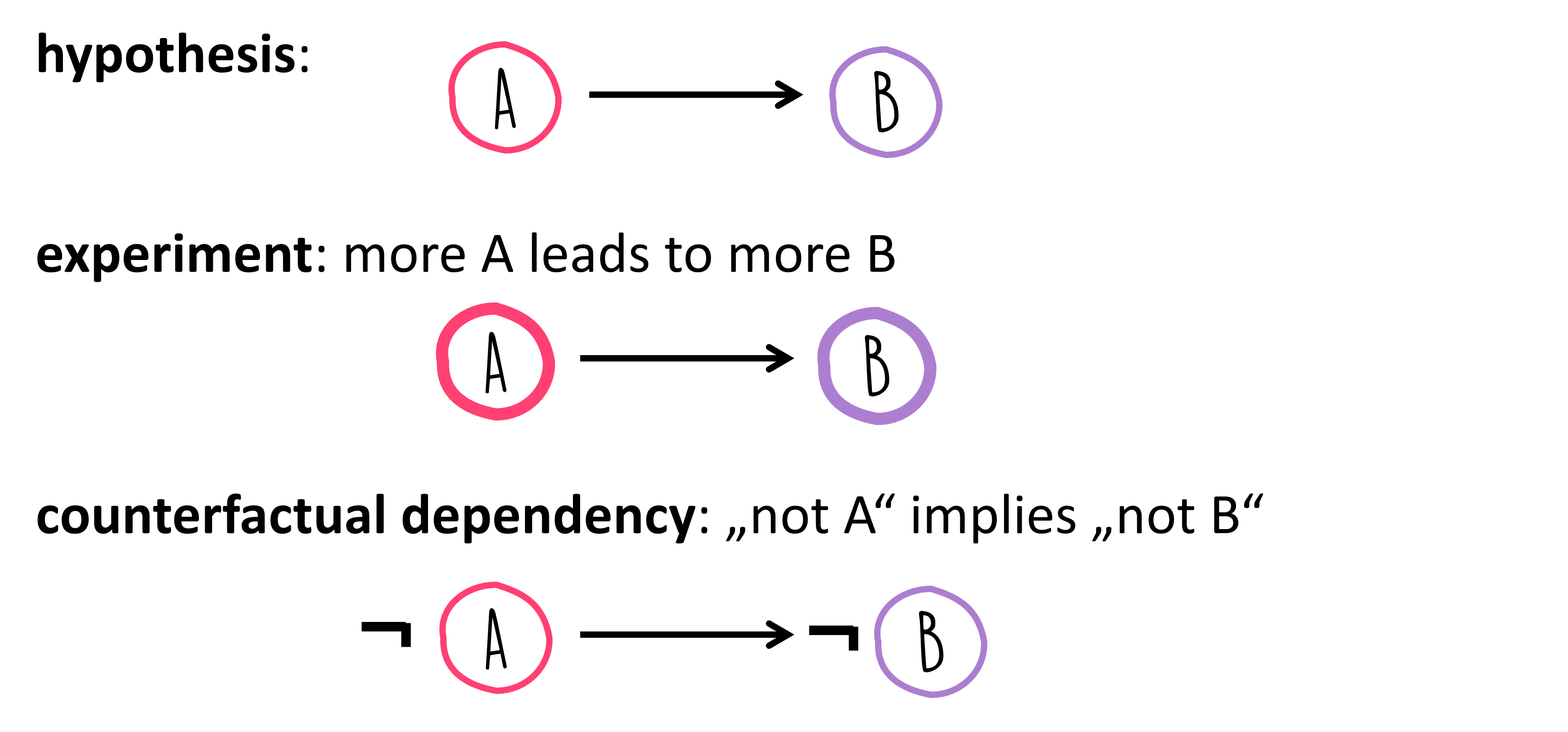

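If two variables $X$ and $Y$ are correlated, many different situations may be possible: $X$ may influence $Y$, $Y$ may influence $X$, a third factor may affect both, or the observed relationship may be accidental.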

So a correlation is often best understood as a clue, not as proof of a causal relationship.
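The "third factor" situation is easy to simulate. In the following sketch, a hidden variable z drives both x and y, while x and y never influence each other. All names, the seed, and the noise levels are assumptions made for this illustration. The two observed variables are strongly correlated, but once the linear effect of z is removed from both, almost nothing remains.

```python
import numpy as np

rng = np.random.default_rng(seed=7)  # arbitrary seed for this sketch
n = 2000

z = rng.normal(0, 1, n)        # hidden common cause
x = z + rng.normal(0, 0.5, n)  # depends on z, not on y
y = z + rng.normal(0, 0.5, n)  # depends on z, not on x

r_xy = np.corrcoef(x, y)[0, 1]

def residuals(values, cause):
    """Remove the linear effect of `cause` from `values` via a straight-line fit."""
    slope, intercept = np.polyfit(cause, values, 1)
    return values - (slope * cause + intercept)

# Correlation of what is left after accounting for z (a partial correlation)
r_partial = np.corrcoef(residuals(x, z), residuals(y, z))[0, 1]

print(f"Corr(x, y)            = {r_xy:.2f}")
print(f"Corr(x, y) given z    = {r_partial:.2f}")
```

In real data the common cause is usually not measured, which is exactly why a correlation alone cannot settle the causal question.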

How, then, can we move from correlation to causality?

There is no single universal recipe. In many cases, causal questions require much more than observational data alone. Carefully designed experiments, interventions, background knowledge, and strong theoretical reasoning often play a central role. For example, if we suspect that $X$ causes $Y$, we may ask whether changing or removing $X$ also changes $Y$, while other relevant factors are controlled for.

This is one reason why causal inference is a major topic of its own, far beyond the scope of this introductory chapter. For now, the key lesson is this: Correlation can point us toward interesting relationships, but it does not by itself tell us why those relationships exist.

That is exactly why correlation analysis is both powerful and dangerous: powerful because it helps us find patterns quickly, and dangerous because it tempts us to overinterpret them.

Correlation analysis is a simple but powerful tool for exploring relationships between variables. It can help us identify patterns, detect redundancy, and generate hypotheses for further investigation. At the same time, correlation must be interpreted with care. Pearson correlation measures linear association only, can be strongly influenced by outliers, and tells us nothing directly about causality.

More on Correlations!

The Pearson correlation coefficient is an important and very widely used measure, but it is by no means the only way to describe relationships between variables. In particular, Pearson correlation is mainly suited for linear relationships between numerical variables and can be quite sensitive to outliers.

A very important alternative is the Spearman rank correlation coefficient. Instead of working directly with the raw numerical values, Spearman correlation first replaces the values by their ranks and then computes the Pearson correlation on those ranks. This makes it especially useful when variables are related in a monotonic but not necessarily linear way. In simpler words: if one variable tends to increase whenever the other increases, even if not along a straight line, Spearman correlation can often detect that relationship better than Pearson correlation. It is also often more robust when the data contains outliers or when exact distances between values are less meaningful than their ordering.

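If $R(X)$ and $R(Y)$ denote the ranks of the values of $X$ and $Y$, then Spearman correlation is simply the Pearson correlation of those ranks:

$$
\rho_s = \frac{\mathrm{Cov}(R(X), R(Y))}{\sigma_{R(X)} \, \sigma_{R(Y)}}
$$

In the special case where there are no tied ranks, Spearman’s rho can also be written as:

$$
\rho_s = 1 - \frac{6 \sum_{i=1}^{n} d_i^2}{n (n^2 - 1)}
$$

where $d_i$ is the difference between the two ranks of observation $i$ and $n$ is the number of observations.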
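Both descriptions of Spearman's rho can be checked against each other on a tiny example. The values below are made up and contain no ties; `rankdata` and `spearmanr` come from `scipy.stats`.

```python
import numpy as np
from scipy.stats import rankdata, spearmanr

# Made-up values without ties; y grows with x, but far from linearly
x = np.array([1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0])
y = np.array([1.2, 0.8, 3.5, 9.0, 7.1, 30.0, 55.0, 41.0])

# Variant 1: Pearson correlation of the ranks
rank_x, rank_y = rankdata(x), rankdata(y)
rho_ranks = np.corrcoef(rank_x, rank_y)[0, 1]

# Variant 2: shortcut formula based on rank differences (valid without ties)
d = rank_x - rank_y
n = len(x)
rho_formula = 1 - 6 * np.sum(d**2) / (n * (n**2 - 1))

# Reference value from SciPy
rho_scipy, _ = spearmanr(x, y)

print(f"ranks: {rho_ranks:.4f}, formula: {rho_formula:.4f}, scipy: {rho_scipy:.4f}")
```

Here the squared rank differences sum to 6, so the shortcut formula gives 1 - 36/504, and all three routes agree.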

Closely related is Kendall’s tau, another rank-based correlation measure. Kendall’s tau is based on the idea of concordant and discordant pairs. A pair of observations is called concordant if the order of the two observations is the same in both variables. For example, if person A has both a higher value in variable X and a higher value in variable Y than person B, the pair is concordant. If the order goes in opposite directions, the pair is discordant. Kendall’s tau summarizes how often such agreements and disagreements occur.
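Counting concordant and discordant pairs by hand makes the idea tangible. The text above does not spell out a formula, so the sketch below assumes the common definition tau = (concordant - discordant) / (number of pairs), which applies when there are no ties. The data values are made up.

```python
from itertools import combinations

import numpy as np
from scipy.stats import kendalltau

# Made-up values without ties: neighbouring observations are swapped in y
x = [1, 2, 3, 4, 5, 6]
y = [2, 1, 4, 3, 6, 5]

concordant = 0
discordant = 0
for (x1, y1), (x2, y2) in combinations(zip(x, y), 2):
    direction = (x1 - x2) * (y1 - y2)
    if direction > 0:    # same ordering in both variables
        concordant += 1
    elif direction < 0:  # opposite ordering
        discordant += 1

n_pairs = len(x) * (len(x) - 1) // 2
tau_manual = (concordant - discordant) / n_pairs

# Reference value from SciPy (identical to the manual count when no ties occur)
tau_scipy, _ = kendalltau(x, y)

print(f"concordant = {concordant}, discordant = {discordant}")
print(f"tau (manual) = {tau_manual:.2f}, tau (scipy) = {tau_scipy:.2f}")
```

Of the 15 possible pairs, 12 are concordant and 3 are discordant, which gives tau = 9/15 = 0.6.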

In rough terms:

- Pearson correlation measures linear relationships.
- Spearman correlation measures whether variables are related in a consistently increasing or decreasing order.
- Kendall’s tau also focuses on rank agreement, but through concordant and discordant pairs.

In Python, pandas can compute all three directly through the .corr() method by choosing the appropriate method. The current pandas documentation lists 'pearson', 'kendall', and 'spearman' as supported options.

    #data_people.corr(numeric_only=True, method="pearson")
    #data_people.corr(numeric_only=True, method="spearman")
    data_people.corr(numeric_only=True, method="kendall")

If you want to compute the correlation between two specific variables, scipy.stats also provides dedicated functions. SciPy offers spearmanr() for Spearman correlation and kendalltau() for Kendall’s tau. Both functions can also return p-values for testing against the null hypothesis of no association.

    from scipy.stats import spearmanr, kendalltau

    # Example: correlation between height and shoe_size
    rho, p_s = spearmanr(data_people["height"], data_people["shoe_size"])
    tau, p_k = kendalltau(data_people["height"], data_people["shoe_size"])

    print(f"Spearman rho = {rho:.3f}, p-value = {p_s:.3g}")
    print(f"Kendall tau = {tau:.3f}, p-value = {p_k:.3g}")

Output:

    Spearman rho = 0.778, p-value = 3.11e-20
    Kendall tau = 0.644, p-value = 2.11e-17

A very good habit in practice is this: if you suspect that a relationship may not be linear, or if your data contains outliers or ordinal variables, do not look at Pearson correlation alone.
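A quick way to follow this habit is to compute all three coefficients side by side. The sketch below uses a constructed, strictly increasing but strongly non-linear relationship (an assumption for illustration, not one of the chapter's datasets) and the `method` argument of pandas' `Series.corr`.

```python
import numpy as np
import pandas as pd

x = np.arange(1, 21)
df = pd.DataFrame({"x": x, "y": np.exp(x / 2)})  # monotonic, but far from linear

# Compute the three coefficients for the same pair of columns
results = {
    method: df["x"].corr(df["y"], method=method)
    for method in ["pearson", "spearman", "kendall"]
}

for method, value in results.items():
    print(f"{method:>8}: {value:.2f}")
```

Because y always increases with x, both rank-based measures are 1, whereas the Pearson coefficient stays clearly below 1. A large gap between them is a useful hint to look at the scatter plot.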

More to read

Freely available books that also cover a lot on correlations:

- “Introduction to Statistics and Data Analysis” by Christian Heumann, Michael Schomaker, and Shalabh (Heumann et al., 2022). This book contains code in R that can easily be converted to Python examples.
- “Statistical Methods for Data Analysis” by Luca Lista (Lista, 2023).
- “Introduction to Statistics” by David Lane (Lane et al., 2003), https://open.umn.edu/opentextbooks/textbooks/459

- Anscombe, F. J. (1973). Graphs in Statistical Analysis. The American Statistician, 27(1), 17–21. 10.1080/00031305.1973.10478966

- Heumann, C., Schomaker, M., & Shalabh. (2022). Introduction to Statistics and Data Analysis: With Exercises, Solutions and Applications in R. Springer. 10.1007/978-3-319-46162-5.pdf

- Lista, L. (2023). Statistical Methods for Data Analysis: With Applications in Particle Physics. Springer. 10.1007/978-3-031-19934-9.pdf

- Lane, D., Scott, D., Hebl, M., Guerra, R., Osherson, D., & Zimmer, H. (2003). Introduction to Statistics. Citeseer. https://open.umn.edu/opentextbooks/textbooks/459